Musk, DOGE, and the phantom promise of AI

General programming language topics

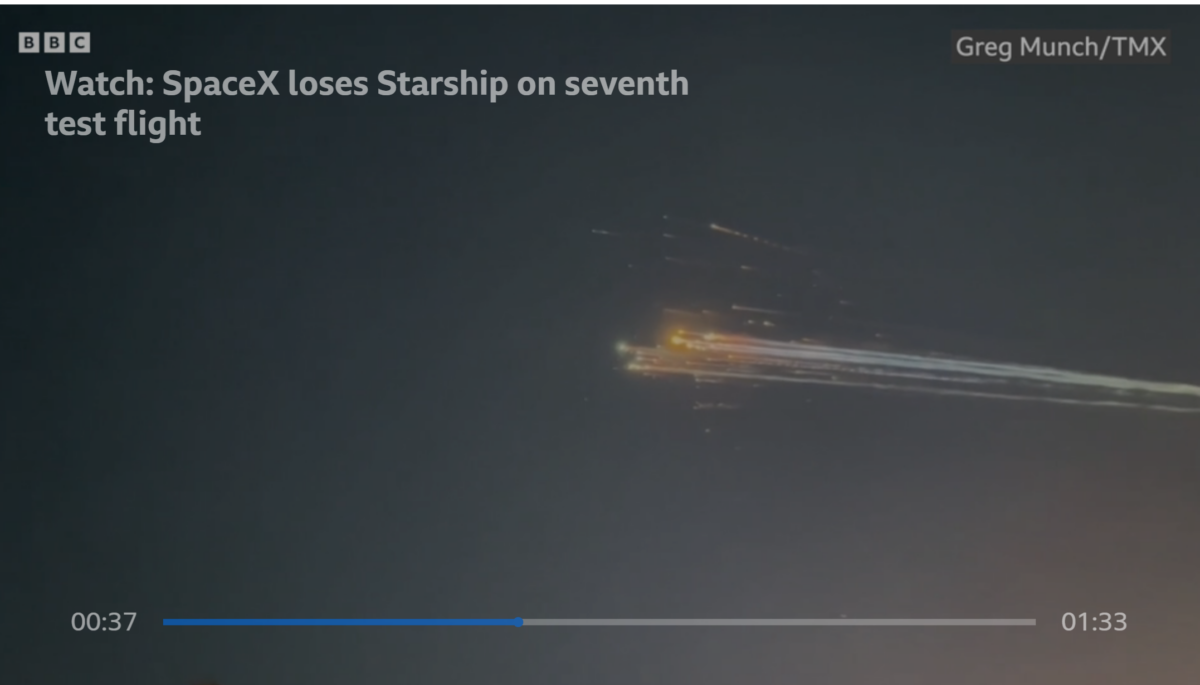

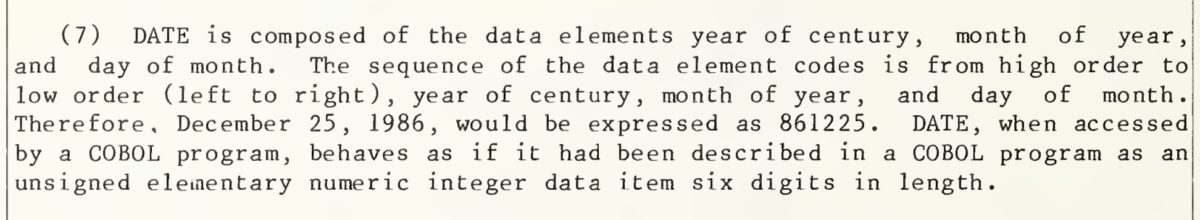

No, the Social Security ‘system’ did not default to a date of May 01, 1875 when a date is missing.

A post by Karl Martino reminded me of Jeff Atwood’s “We are typists first, programmers second.” Atwood was responding, in hearty agreement, to a post by Steve Yegge, who wrote: “I was trying to figure out which is the most important computer science course a CS student could ever take, and eventually realized it’s Typing 101. The really […]

Recovered from the Wayback Machine. I didn’t know the `?>` closing tag was optional in files that contain only PHP code, either. I did know about the problem with white space following the end tag; probably every PHP developer does. What header? What ******** header!? Other useful stuff on PHP best practices at […]

I’ve written about this previously, but it’s worth repeating. CSS can be dynamically created using a PHP application, as long as the content type is set to CSS, which declares the output of the file as a style sheet: `<?php header('Content-type: text/css'); ?>` The style sheet can then be used directly or imported into another: `@import "photographs.php";` I use […]
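As a rough illustration of the idea, a PHP-generated style sheet might look something like the sketch below. The `build_photo_css` helper, the `.photo` selector, and the color and width values are all hypothetical, made up for this example; only the `header()` call and the `photographs.php` file name come from the excerpt above.

```php
<?php
// photographs.php: a minimal sketch of a style sheet generated by PHP.

// Hypothetical helper that builds the CSS text from a couple of values.
// In practice these might come from a config file or a database.
function build_photo_css(string $accent, int $width): string
{
    return ".photo {\n"
         . "    border: 1px solid {$accent};\n"
         . "    width: {$width}px;\n"
         . "}\n";
}

// Declare the output of the file as CSS. This has to run before any
// other output is sent, including stray white space.
header('Content-Type: text/css');

echo build_photo_css('#336699', 240);
```

The closing `?>` is left off on purpose: the file contains only PHP, so the tag is optional, and omitting it means no trailing white space can slip into the output.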